[PHOTOS and VIDEO COMING SOON]

Overview

Punch Me, I Dare You is a game that Tony Lim and I worked on for our ICM final. It uses a hacked Kinect, Processing, and MaxMSP. Download the full game here.

The Game

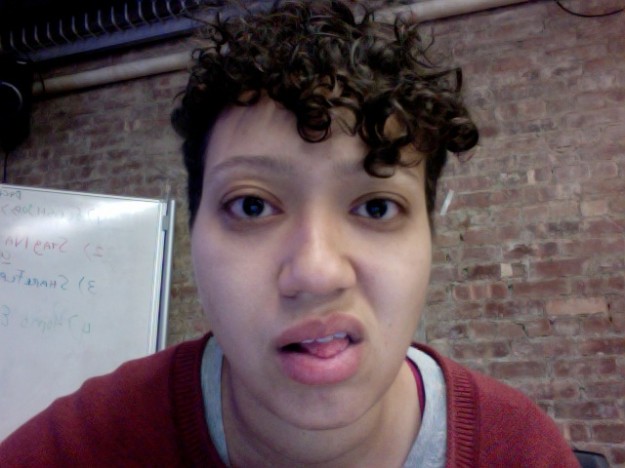

It’s a stress-relieving game that encourages the player to punch areas of a face on screen (see my teasing face above). There are five areas: left eye, right eye, nose, left cheek, and right cheek. Each area must accumulate a certain amount of damage through punching before the player can move to the next area. A short clip of high-energy music plays each time a punch lands, and the music progresses as the player progresses through the game. The game is timed, which encourages players to beat their own time.

Here Tony is testing out the game.

Technicalities

I am using skeletal tracking through the SimpleOpenNI library for Processing, which lets me track the player’s hands in three-dimensional space. When either hand passes through the current target area, the “damage” variable increases until it’s time to load the next area.
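The damage-and-advance logic can be sketched roughly as follows. This is plain Java rather than a full Processing sketch, and the class name `AreaProgress`, its methods, and the per-area damage threshold are all assumptions for illustration, not the game's actual code.

```java
// Hypothetical sketch: names and the damage threshold are illustrative assumptions.
class AreaProgress {
    // The five target areas, in the order described in the post.
    static final String[] AREAS = {
        "left eye", "right eye", "nose", "left cheek", "right cheek"
    };

    private final int damagePerArea; // assumed: same threshold for every area
    private int areaIndex = 0;
    private int damage = 0;

    AreaProgress(int damagePerArea) {
        this.damagePerArea = damagePerArea;
    }

    /** Registers one landed punch and advances to the next area once enough damage accumulates. */
    void addHit() {
        if (isFinished()) {
            return; // nothing left to punch
        }
        damage++;
        if (damage >= damagePerArea) {
            damage = 0;
            areaIndex++;
        }
    }

    boolean isFinished() {
        return areaIndex >= AREAS.length;
    }

    String currentArea() {
        return isFinished() ? "done" : AREAS[areaIndex];
    }
}
```

In a Processing sketch, `addHit()` would be called from `draw()` whenever a punch is detected on the current area.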

The trickiest part was figuring out how to program what counts as a punch.

2D punches: Many of the examples I found using hands worked in 2D and drew a line where the hand was being tracked. I began writing this game with two-dimensional punches, where the hands were tracked on the x and y axes. The player essentially had to swipe across the area, side to side. To keep the damage count from growing while a player’s hand stayed inside the target area, I made it so that the hand had to enter the area and then leave it for the motion to count as one punch.
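The enter-then-leave rule amounts to detecting an edge in a per-frame "is the hand inside?" flag. Here is a minimal sketch of that idea in plain Java; `TargetArea` and `SwipeDetector` are hypothetical names, and the rectangular target shape is an assumption.

```java
// Hypothetical sketch: a rectangular target and one detector per tracked hand.
class TargetArea {
    final float x, y, w, h; // top-left corner plus width and height, in screen pixels

    TargetArea(float x, float y, float w, float h) {
        this.x = x;
        this.y = y;
        this.w = w;
        this.h = h;
    }

    boolean contains(float px, float py) {
        return px >= x && px <= x + w && py >= y && py <= y + h;
    }
}

class SwipeDetector {
    private boolean wasInside = false; // hand state on the previous frame

    /**
     * Call once per frame with the hand's screen position.
     * Returns true only on the frame where the hand leaves the area
     * after having been inside it, so lingering inside adds no damage.
     */
    boolean update(TargetArea area, float handX, float handY) {
        boolean inside = area.contains(handX, handY);
        boolean punched = wasInside && !inside;
        wasInside = inside;
        return punched;
    }
}
```

Each hand would need its own `SwipeDetector` so that one hand resting in the area does not mask a swipe by the other.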

During user testing, it was clear that people immediately started punching forward, not realizing that the game only tracked motion in 2D, meaning side to side. In the game, I placed images of boxing gloves over the player’s hands. The gloves give clear instruction to the player as to what they’re supposed to do: punch. That’s great for what we were trying to accomplish in communicating a game environment, but the programming didn’t support the same instructions. Had I placed knives in the hands instead of gloves, maybe players would be more inclined to slash across the target areas.

Punching through z-index: Based on this user feedback, I tried using the z-index information (the depth value) from the hands’ positions to create a more 3D feel in punching. The idea was that in order for a punch to count, the hand would have to fall within the x and y range of the target area and also pass a certain z-index threshold.
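A rough sketch of that check, in plain Java. The class and parameter names are hypothetical, and the sketch assumes that z is the hand's distance from the sensor, so a forward punch makes z smaller.

```java
// Hypothetical sketch: assumes z is distance from the sensor (smaller = closer).
class DepthPunch {
    /**
     * A punch counts when the hand is over the target in x and y
     * and has been pushed closer to the sensor than zThreshold.
     */
    static boolean isPunch(float handX, float handY, float handZ,
                           float areaX, float areaY, float areaW, float areaH,
                           float zThreshold) {
        boolean overTarget = handX >= areaX && handX <= areaX + areaW
                          && handY >= areaY && handY <= areaY + areaH;
        boolean pastThreshold = handZ < zThreshold;
        return overTarget && pastThreshold;
    }
}
```

Note that `zThreshold` here is a single fixed distance, independent of where the player stands or how long their arms are.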

The problem with this method was figuring out the appropriate z-index threshold. It would mean telling the player to stand at an exact distance from the Kinect sensor so that their hand could pass the threshold when they threw a punch. Also, arm length varies from person to person, so the threshold would need adjusting for each player. Because of these technicalities, players would be much more aware of the mechanics behind the game. But we really wanted them to play, punch, release some stress, and see the damage. We didn’t want players to focus too much on how they were supposed to stand or punch, so making the punches easy and natural was important.

Biomechanics of a punch: My next idea for programming a punch was to use information from the joints in relation to each other, instead of using the hand’s position in space. If I could figure out whether the hand, elbow, and shoulder of the same arm were in a line (or close to a line) and pointing at the target area, this would ideally be a more natural punch for our game environment.
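One way the "joints in a line" test might be expressed is by comparing the direction of the upper arm (shoulder to elbow) with the direction of the forearm (elbow to hand). The sketch below is plain Java and purely illustrative: this direction was never implemented in the game, the names are hypothetical, and it covers only the straightness check, not whether the arm points at the target.

```java
// Hypothetical sketch of an arm-straightness test; not code from the game.
class ArmStraightness {
    /**
     * Cosine of the angle between the upper arm (shoulder -> elbow)
     * and the forearm (elbow -> hand). 1.0 means a perfectly straight arm.
     * Each joint is a 3-element array {x, y, z}.
     */
    static double alignment(double[] shoulder, double[] elbow, double[] hand) {
        double[] upper = sub(elbow, shoulder);
        double[] fore = sub(hand, elbow);
        double denom = length(upper) * length(fore);
        if (denom == 0) {
            return 0; // degenerate case: two joints at the same position
        }
        return dot(upper, fore) / denom;
    }

    /** True when the arm is straight enough; minCos is an assumed tuning value such as 0.95. */
    static boolean isExtended(double[] shoulder, double[] elbow, double[] hand, double minCos) {
        return alignment(shoulder, elbow, hand) >= minCos;
    }

    private static double[] sub(double[] a, double[] b) {
        return new double[] {a[0] - b[0], a[1] - b[1], a[2] - b[2]};
    }

    private static double dot(double[] a, double[] b) {
        return a[0] * b[0] + a[1] * b[1] + a[2] * b[2];
    }

    private static double length(double[] v) {
        return Math.sqrt(dot(v, v));
    }
}
```

Because the test uses only the angle between the two arm segments, it does not depend on where the player stands or how long their arms are.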

Unfortunately, I didn’t have time to fully explore this direction as our deadline for the final was approaching. We moved forward using the 2D punches and watched people flail their arms at the screen.

The music programming is handled through MaxMSP. As we pass it the value of the “damage,” it plays the next music clip. The idea is that by the end of the game, the player will have heard the whole song.

Related Post: Kinect Game Progress